But I can understand why these programs may take longer to release a native ARM version. However, that doesn't mean the companies aren't dragging their feet compared to others that have made the transition with equally complex and mission-critical programs. It takes time and effort, and since these programs are "mission critical" for the people who use them, they shouldn't be buggy; that's why they take a bit longer to transition to a new architecture. Sometimes the vendor just bites the bullet and rewrites the program from the ground up, since the existing version is too crufty to port reliably and is more costly and difficult to maintain in the long run. Large programs like Visual Studio and Maya are complex and full of cruft collected over years of incremental development, so they are not easy to port to a whole new architecture while maintaining the same behavior.

For Docker-on-M1 to have been taken out of preview, it should have been possible to say confidently "a container running on M1 will behave identically to that container running on any other host" - this is not currently true. Given that the entire point of using containers is repeatability, I find this kind of failure particularly insidious. Performance remains atrocious, and while I haven't actually got the kernel to blow up entirely yet, it took all of five minutes for me to get a "known good" container to fail with (signal: 11, SIGSEGV: invalid memory reference). So, not wanting to slander them in case things had improved dramatically since I last tried, I just updated to the latest release of Docker for M1 and - yeah, err, no, still broken. The docker client is fine, so if you can offload to a docker host running on supported hardware all is well - but not if you expect to build/run containers locally. Last time I checked, even Arm images ran in emulation; I'll give it another go this afternoon and check if that's still true.
You can crash the Linux kernel pretty much on demand, and performance is a dog. I’m sure this setup will change again, but I’m pretty happy with it for now. Except it's a buggy mess unsuitable for real-world use, and Docker should be ashamed of themselves for taking the "preview" label off before it was ready.
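For anyone wanting to check the emulation point for themselves, here is a rough sketch that compares the architecture an image was built for against the host's. It assumes the `docker` CLI is on the PATH and the image has already been pulled; the helper names (`normalize_arch`, `image_arch`, `needs_emulation`) and the alias table are my own, for illustration only. It only tells you whether the two architectures differ, not what the Docker VM actually does underneath.

```python
import subprocess

# Assumed alias table: different tools report the same CPU family under different names.
_ALIASES = {"x86_64": "amd64", "aarch64": "arm64"}


def normalize_arch(name: str) -> str:
    """Map an architecture name to a single canonical spelling."""
    name = name.strip().lower()
    return _ALIASES.get(name, name)


def image_arch(image: str) -> str:
    """Ask the docker CLI which architecture a local image was built for."""
    result = subprocess.run(
        ["docker", "image", "inspect", "--format", "{{.Architecture}}", image],
        check=True,
        capture_output=True,
        text=True,
    )
    return normalize_arch(result.stdout)


def needs_emulation(image_architecture: str, host_machine: str) -> bool:
    """True when the image architecture differs from the host architecture."""
    return normalize_arch(image_architecture) != normalize_arch(host_machine)
```

Something like `needs_emulation(image_arch("alpine"), platform.machine())` (with `import platform`) would then report a mismatch on an M1 for an amd64-only image; `alpine` is just a placeholder image name.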

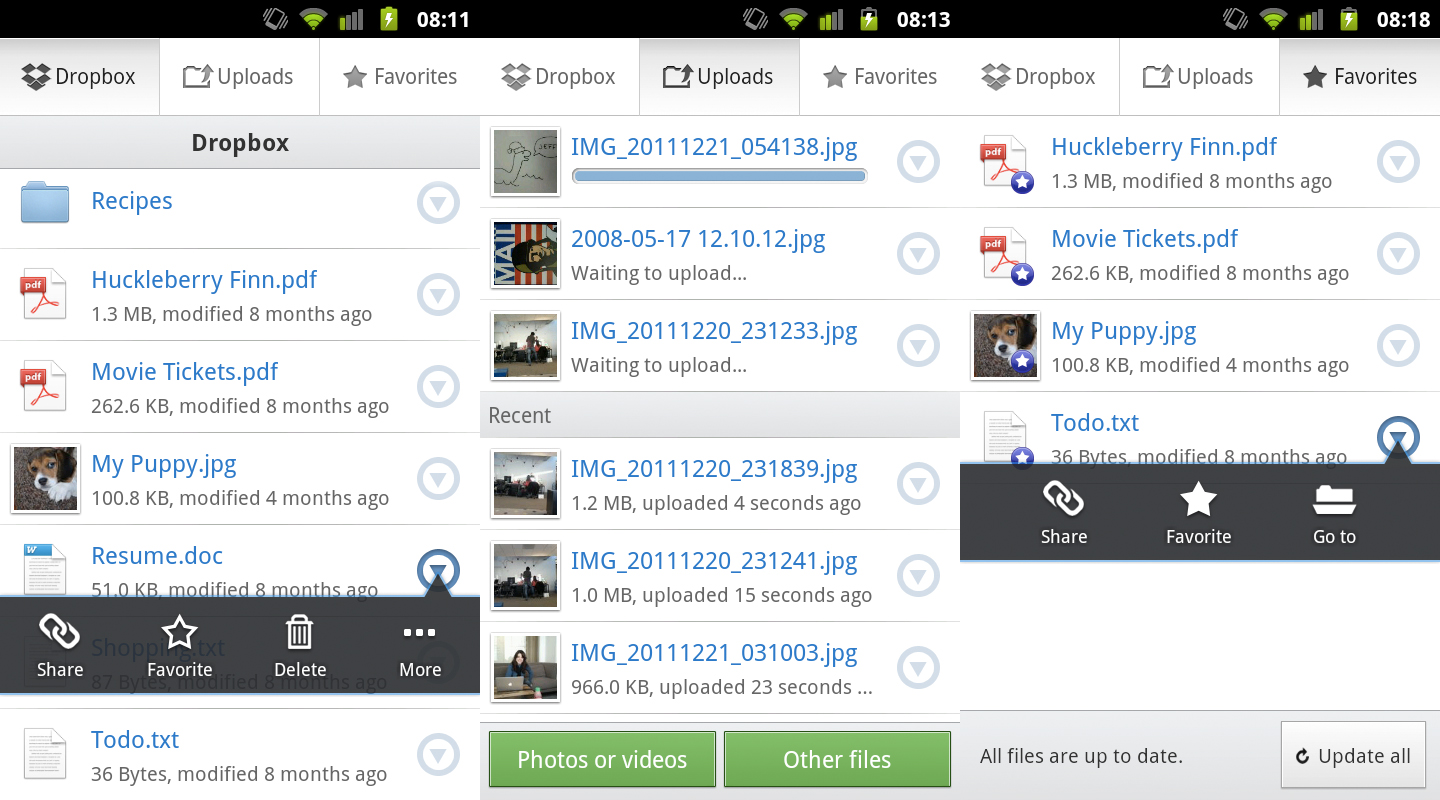

Dropbox is significantly faster at uploading (at least at my house). Dropbox does this cool thing where if you try to upload a file that is already on their system it won’t actually push the bits; instead it will point to the existing copy or make a duplicate internally at Dropbox (not sure what actually happens, but it doesn’t matter, the point is that you don’t have to upload that file). The Dropbox clients are also faster at syncing between machines than JungleDisk’s sync feature. The one-way sync (nothing is deleted) with ChronoSync is an important piece, since if you delete something on one Dropbox client then that deletion is instantly propagated everywhere. So if you decide you don’t want something on one machine and you delete it, it gets deleted on every machine that is using Dropbox. Dropbox will soon come with selective sync, which I am anxiously awaiting. The SuperDuper clone is just a last-chance backup in case of disk failures.
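To make the "one-way sync, nothing is deleted" idea concrete, here is a minimal sketch of that behavior. This is not how ChronoSync is implemented, just an illustration under my own assumptions: the function name `one_way_sync` is hypothetical, and a file counts as changed if its size differs or the source copy is newer.

```python
import shutil
from pathlib import Path
from typing import List


def one_way_sync(source: Path, dest: Path) -> List[Path]:
    """Copy new or changed files from source to dest; never delete anything in dest."""
    copied = []
    for src_file in source.rglob("*"):
        if not src_file.is_file():
            continue
        target = dest / src_file.relative_to(source)
        if target.exists():
            src_stat, dst_stat = src_file.stat(), target.stat()
            # Skip files that look unchanged: same size and source not newer.
            if (src_stat.st_size == dst_stat.st_size
                    and src_stat.st_mtime <= dst_stat.st_mtime):
                continue
        target.parent.mkdir(parents=True, exist_ok=True)
        shutil.copy2(src_file, target)  # copy2 also copies the modification time
        copied.append(target)
    # Files that exist only in dest are deliberately left alone.
    return copied
```

Because nothing is ever removed from the destination, a file deleted from the source (say, by a deletion that Dropbox propagated) still survives on the backup drive.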

I just recently switched my offsite backup from S3/JungleDisk to Dropbox. Everything except music and DVDs is synced with Dropbox. Everything is one-way synced to external drive 1 via ChronoSync nightly. External drive 1 is cloned to external drive 2 via SuperDuper weekly.

I’ve gone through many variations of my backup strategy since I started storing things digitally. The master copy is stored on my iMac’s local disk.
